Research

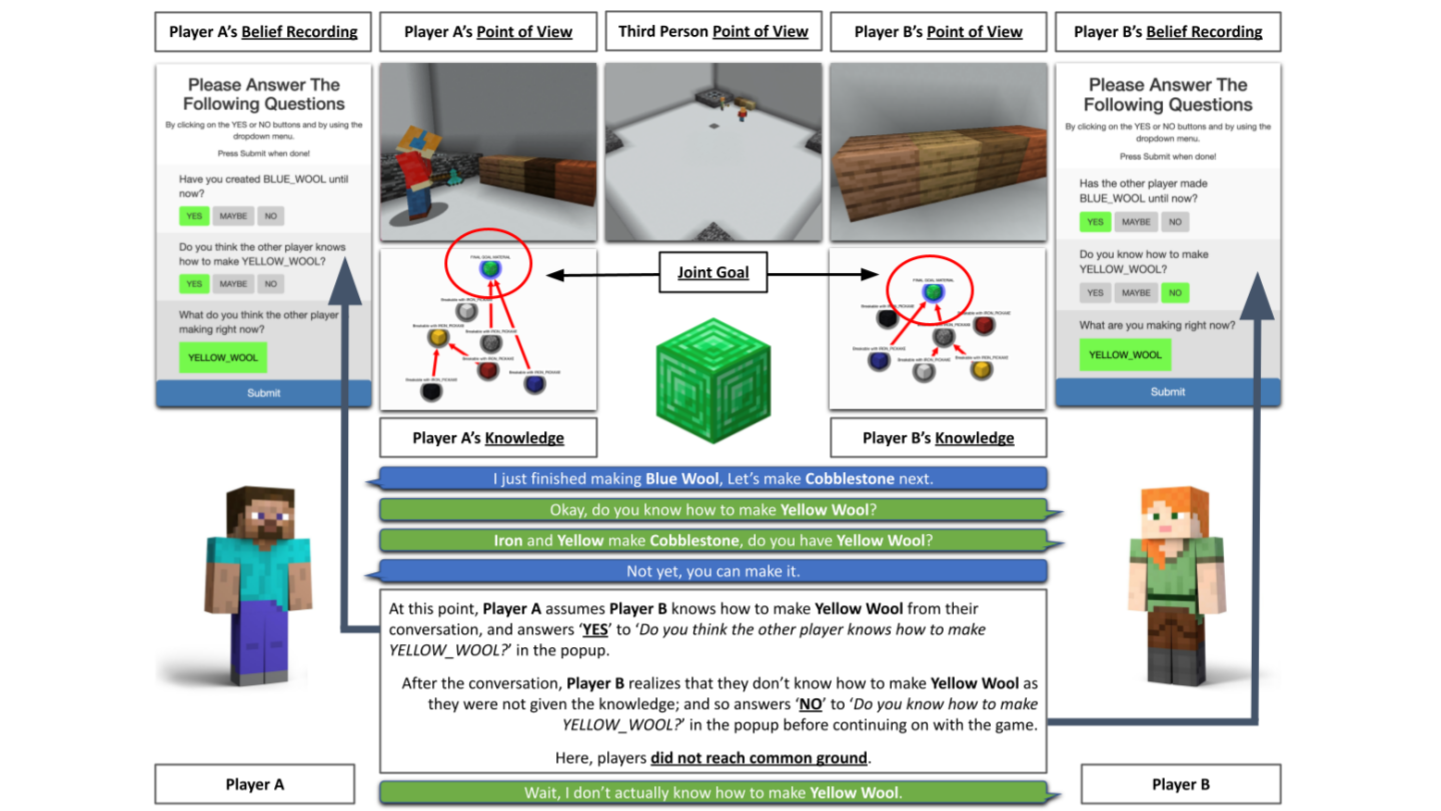

Language use in human communication is grounded in physical and social environments, shaped by our goals, and connected to our shared experiences, and it requires an understanding of each other's abilities, knowledge, and beliefs. SLED develops computational models for natural language processing that are sensorimotor-grounded, pragmatically rich, and cognitively motivated.